The Trust Problem With AI Agents

If you're a developer, it's likely you've used an AI tool. And if you've used an AI tool, you've probably had a moment where you got an output you didn't expect. I know I have. That output might be a diff that touched a file you didn't mean to edit, or a commit in your git history that wasn't supposed to be pushed, or an instruction that shouldn't have been written. I've even followed a generated tutorial that led me astray!

When I've been there myself, I've fully gaslit myself into thinking that I did the wrong thing. Now, I do think that this type of interaction happens less with improved models and tooling. But these tools (by design, unfortunately) are just not that good at communicating when they're incorrect unless you point it out. So, you have issues that you didn't see coming, and you have to anticipate those issues existing when you don't fully trust your tools.

That communication and output from tools just hasn't been "it" yet.

Why developers can't entirely rely on agents

Trust in our tools comes from reliable, predictable outputs. This goes for everything. If you had the fanciest air fryer in the world with all the bells and whistles, but it only cooked your food half the time, you wouldn't care as much about its capabilities because it didn't reliably use them. If you bought a super powerful battery that claimed to charge your phone in 10 minutes, 30% of the time... is it really powerful?

Capability is exciting (and often what we look at when we consider switching to a brand new tool or buying a new thing), but predictability and reliability are what will keep you using a tool over time.

We as an industry gave coding agents and AI tools a ton of capabilities... and now we trust them less. Developers don't trust agents much for a few reasons.

Culprit 1: Transparency

If you don't watch every single step of your coding agent's reasoning (if your tool even surfaces it), you often end up with a changelog that tells you what an agent did and not necessarily why. You, as the developer, end up getting stuck defending decisions you didn't make, and working from the output of a black box.

When you can see the reasoning, you can stop the bad assumption early, and steer the ship a bit. But when you can't (or if you're orchestrating multiple agents at once), you might catch those decisions late in a diff (or worse, in production).

An example of my own is when I had an app with users, and I wanted to be able to contact users with verified emails. I used some AI tooling and had a functioning feature that I was happy with, but later when I went to review the code, it was gross:

const result = users.filter(u => u.active && u.emailVerified).map(u => ({ id: u.id, name: u.name, email: u.email }));

I mean, yes, this functions, but... what am I even looking at? Yes, I can read it closely and figure it out, but that slows down maintenance over time. If I were able to steer earlier (or write this myself), I would've chosen something more legible, like:

const isReadyToContact = (user) => user.active && user.emailVerified;

const getContactInfo = (user) => ({
  id: user.id,
  name: user.name,
  email: user.email,
});

const contactableUsers = users.filter(isReadyToContact).map(getContactInfo);

The agent made choices that might have been technically defensible, sure, but they're not the ones I would have made. This is a small example, but at scale, I want maintaining an application to be an experience where anyone (a teammate, my future forgetful self, or an agent) can jump in and know what's going on without having to parse too deeply.
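For what it's worth, the readable version isn't a behavior change. Here's a quick sketch that runs both versions against some made-up users (the sample data is hypothetical; only the field names come from the snippets above) and checks that they return the same list:

```javascript
// Hypothetical sample data; only the field names match the snippets above.
const users = [
  { id: 1, name: "Ada", email: "ada@example.com", active: true, emailVerified: true },
  { id: 2, name: "Grace", email: "grace@example.com", active: true, emailVerified: false },
  { id: 3, name: "Linus", email: "linus@example.com", active: false, emailVerified: true },
];

// The one-liner the agent produced.
const result = users
  .filter((u) => u.active && u.emailVerified)
  .map((u) => ({ id: u.id, name: u.name, email: u.email }));

// The named version.
const isReadyToContact = (user) => user.active && user.emailVerified;
const getContactInfo = (user) => ({ id: user.id, name: user.name, email: user.email });
const contactableUsers = users.filter(isReadyToContact).map(getContactInfo);

// Both versions produce the same list, so the difference is readability only.
const sameOutput = JSON.stringify(result) === JSON.stringify(contactableUsers);
console.log(sameOutput); // true
```

A nice side effect of the named version: isReadyToContact and getContactInfo can each be tested on their own, without building a whole user list first.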

If your tools don't tell you what's going on, then you don't know what you're maintaining, or why you're maintaining it a certain way.

Culprit 2: Scope creep

I can't tell you how many times an agent has gone against my instructions and ended up "moving my cheese" and touched files that I didn't expect it to. And the thing is, some of those changes might be good! Needed, even! That's what makes this kind of scope hard to condemn and push back on in a "black and white" way. It's not that simple.

The agent isn't being malicious, pulling a Captain Phillips and saying, "I am the captain now." It just optimizes for "done, and done very fast," rather than "done, exactly the way you asked."

When a change goes outside of scope, it makes code review harder. I've gotten so many pull requests on my open source projects that I've had to close because, even though they solved the issue they claimed to, they also changed more than the issue called for. As a maintainer, that means I have to audit more of the diff, rather than just checking the one thing I expect. That mental lift is frustrating.

With AI tools, that mental lift scales a ton, because they're just shipping more, faster. Agents are really enthusiastic contributors to your projects, and if you can't rein them in, the review process constantly includes the auditing step of what actually was changed, rather than just a quality check.
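One way to take some of that auditing off your plate is to check a diff's file list against the scope you actually asked for. This is only a minimal sketch: findOutOfScopeFiles, the paths, and the file names are all made up for illustration, and in practice the list of changed files would come from somewhere like `git diff --name-only`.

```javascript
// Hypothetical helper: returns the changed files that fall outside the
// paths the agent was told it could touch.
function findOutOfScopeFiles(changedFiles, allowedPrefixes) {
  return changedFiles.filter(
    (file) => !allowedPrefixes.some((prefix) => file.startsWith(prefix))
  );
}

// Made-up example: the task was scoped to the contact feature.
const allowedPrefixes = ["src/contact/", "tests/contact/"];
const changedFiles = [
  "src/contact/sendEmail.js",
  "tests/contact/sendEmail.test.js",
  "src/auth/session.js",
  "package.json",
];

const outOfScope = findOutOfScopeFiles(changedFiles, allowedPrefixes);

// Logs the two files outside the agreed scope:
// src/auth/session.js and package.json
console.log(outOfScope);
```

This doesn't tell you whether the extra changes are good or bad (some of them might be needed!). It just surfaces them, so the surprise shows up as an explicit list instead of something you stumble on halfway through the diff.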

Culprit 3: No shared reasoning

Some AI tools share how they reason about things, to an extent. But you can't sit inside an agent's brain any more than you can sit inside a fellow human programmer's. You can read the output it made and the diff it created, but you're reviewing work without a lot of context.

We often say "context is king" when we talk about what we feed to AI tools, but it goes both ways! The tools should give context back to us, too: we're the developers who are ultimately responsible for the output.

With a human collaborator, you might have other context, like a DM on Slack or a call or documentation that gives you a lot more to work with, mentally. With AI tools... you basically have the code and the chat window. It's fine but not good enough, in my opinion.

All together... is a bit of a mess

When you put a lack of transparency, scope creep, and a lack of shared reasoning together, they turn out to be symptoms of the same design gap: tools that optimize for completion (and not collaboration).

That collaboration layer, I think, is what will make developers more willing to trust the tools and reliably improve the output they get from them.

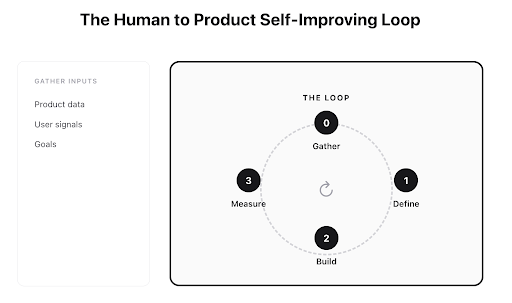

Humans need to fit in the loop at more steps of the process. There's a cheesy proverb that I actually think applies well here: "If you want to go fast, go alone; if you want to go far, go together." Agents often move alone, by design. We need to move with them to trust them more.

What Human-in-the-Loop Actually Means

If I had a nickel for every time I've heard "human-in-the-loop" in a meeting... anyway, that term has really lost all meaning. Real human control requires:

- Seeing the plan before executing on it (not just a summary after the fact)

- Ability to modify the plan, not just a thumbs up or down (binary choice is not control)

- The agent reviewing itself first (it needs to check itself before it ~~wrecks itself~~ hands things off to you)

The team behind Jules by Google Labs is experimenting with a new "set signal → give context → review/approve → execute" system that will try to do this, empowering the decision makers for product builders to be that human.

I do like the idea of reviewing and approving things as separate steps, and executing only after those steps have been completed. It assigns roles well and means that the agent, theoretically, shouldn't go rogue with these guardrails in place.
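To make "modify, then approve, then execute" a bit more concrete, here's a minimal sketch of a plan gate. To be clear, this is not how Jules (or any real tool) works under the hood: createPlan, revisePlan, approvePlan, executePlan, and the step names are all hypothetical. It covers seeing and editing the plan up front, and it leaves out the agent's self-review step.

```javascript
// Hypothetical plan gate; none of these names come from a real tool.
function createPlan(steps) {
  return { steps: [...steps], status: "proposed" };
}

// Modify: the human edits the steps instead of only voting yes or no.
// Any revision resets the plan to "proposed", so it needs a fresh approval.
function revisePlan(plan, editSteps) {
  if (plan.status === "executed") {
    throw new Error("Cannot revise a plan that already ran");
  }
  return { steps: editSteps([...plan.steps]), status: "proposed" };
}

// Approve: an explicit step, separate from reviewing and editing.
function approvePlan(plan) {
  if (plan.status !== "proposed") {
    throw new Error("Only a proposed plan can be approved");
  }
  return { ...plan, status: "approved" };
}

// Execute: refuses to run anything that was not approved.
function executePlan(plan, runStep) {
  if (plan.status !== "approved") {
    throw new Error("Plan must be approved before execution");
  }
  const results = plan.steps.map((step) => runStep(step));
  return { ...plan, status: "executed", results };
}

// The agent proposes a plan that includes an out-of-scope step.
const proposed = createPlan([
  "Add emailVerified filter",
  "Refactor auth module",
  "Add tests for contact list",
]);

// The human removes the step they never asked for.
const revised = revisePlan(proposed, (steps) =>
  steps.filter((step) => step !== "Refactor auth module")
);

// Trying to execute before approval is blocked.
let blocked = false;
try {
  executePlan(revised, (step) => `done: ${step}`);
} catch (error) {
  blocked = true;
}

const approved = approvePlan(revised);
const finished = executePlan(approved, (step) => `done: ${step}`);

console.log(blocked); // true
console.log(finished.results.length); // 2
```

The part I care about here is that approval is a state the plan has to pass through, not a button that sits next to the output after the fact.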

But... will it solve the trust issue?

The Honest Cliffhanger

I don't know if I have a proper verdict for you yet, dear reader. I'm getting access to Jules myself, and I'm going to report back on whether it holds up in practice!

This is the first post of a series on this subject, and I'm writing about this live with you as I figure things out along the way. I'm not going to tell you what to think, because I am still forming my own opinions!

So, that being said... smash that subscribe button and follow along! Join the waitlist if you'd like to join me on this journey.

I personally am hoping that this approach might be a solution to the trust problem.

...maybe it will be? 👀